Sinapt: Two Products, Not One

Slept on yesterday's Sinapt brainstorm. Woke up with one sharp correction: it's not one product, it's two. The knowledge-base infrastructure ships first; the cockpit is a future opinionated layer on top.

There's a Serbian saying: "Пусти да преспава" — let the idea sleep overnight. Don't decide impulsively. Let one night pass while your conscious mind is somewhere else. The real digestion happens while you're not watching.

I published the first Sinapt piece yesterday: the consolidation of a year of AI-native practice, plus the brainstorm of a single application that would close the loop. Then I went to bed thinking about it. Dreamt about it. Woke up with a single sharp realization that yesterday's writing almost surfaced but didn't quite name.

Sinapt is not one product. It's two.

This piece is the morning-after correction.

Two products, not one

Re-read yesterday's piece through the lens of which problem each part solves, and the seam between two products becomes obvious in retrospect:

- Problem 1: Knowledge base sharing across teams, machines, and cloud compute is unsolved. The cockpit pattern keeps the KB outside the code repo, which means it's mine alone — no path to a teammate's machine, no path to a cloud agent's context.

- Problem 2: Cognitive load from too many cockpits. The operator now juggles four-plus apps and is the integration layer.

Yesterday's article proposed a single product — Sinapt — that solved both. Sleeping on it surfaced a different read: these are two problems with different shapes, different audiences, different timelines, and very different complexity profiles. They should be two products. And one of them should come first. Decisively first.

Product 1 — Sinapt (the knowledge base)

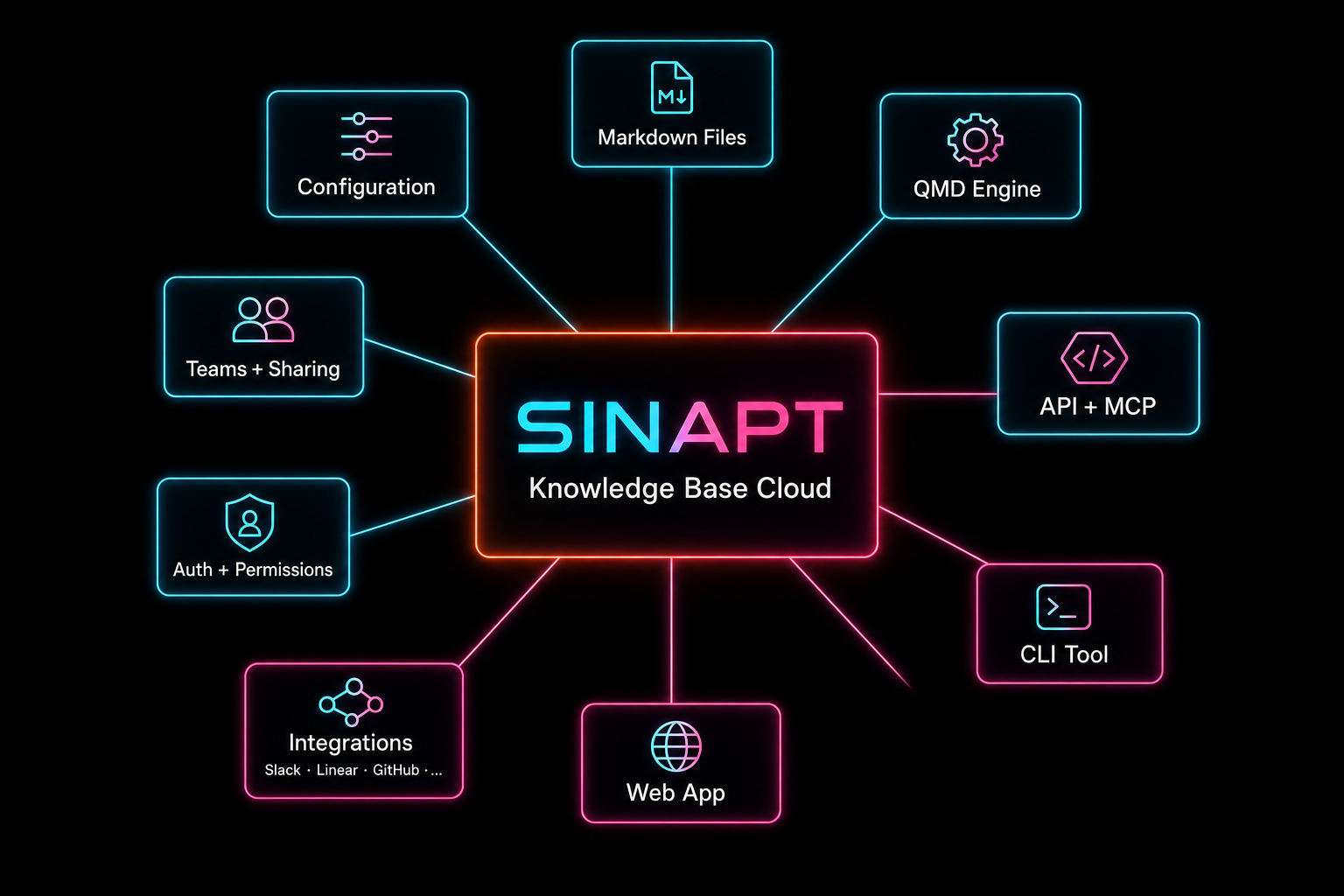

Cloud-native knowledge-base infrastructure for AI-native engineering. Markdown files, hybrid lexical + vector search via QMD, team scoping, integrations, web app for manual curation, full API + MCP surface, CLI for terminal-native workflows. Universally useful — your existing local cockpit pattern keeps working, just with the KB layer moved to the cloud and shareable. Not opinionated about which agents you use. Not opinionated about which editor you use. Not opinionated about your workflow.

This becomes the core. Build this first.

Product 2 — Sinapt Cockpit (the future opinionated layer)

The desktop + mobile application that integrates Claude Code CLI, Codex CLI, the editor, the scratch buffer, and your project view in one window. This is the collapse-the-complexity move from yesterday's piece: the operator burden of the pre-AI era, with the same power as today.

Three things make this the second product, not the first:

- It depends on Product 1. The cockpit's value comes from being one window over the cloud KB + integrations + team scoping. Without the KB layer in the cloud, the cockpit is just another desktop app over local files — no improvement on what already exists.

- It's deeply opinionated. People have their own editors, their own terminal preferences, their own agent setups, their own muscle memory. Asking them to switch a workflow they've spent a year tuning is a much harder sale than asking them to add a cloud KB their existing workflow can talk to.

- It's far more complex to build. Native cross-platform desktop + mobile, plus integrating with Claude Code CLI and Codex CLI as embedded processes, plus replicating the scratch-prompt + editor + GitHub surfaces — that's a 6-12 month build. The KB infrastructure is a 2-3 month proof-of-concept.

Build the cockpit later, as a layered product on top of the KB. Build the KB now.

Sinapt — the knowledge base, deeper

Here's the architectural shape. Sinapt-the-product is a hub with a knowledge-base core surrounded by the layers that make it useful to a team or a company.

Walking the spokes:

Markdown files — the substrate

Same shape as the cockpit pattern from The Knowledge Base That Builds Itself: short overview.md entry points + deeper documents linked from there. Cloud-stored (S3 or equivalent), versioned, agent-curated. Files stay text — no opaque vector-store-only formats. Your KB is still readable and grep-able even if Sinapt disappears tomorrow.

QMD engine — the search layer

The hybrid lexical + vector search engine documented in The Context Wall, but running as a managed cloud service instead of a local daemon. Indexes the markdown files automatically (cloud equivalent of the post-commit hook), serves both BM25 and embedding queries, returns ranked chunks. Same query interface as today — your existing prompts and skills don't need to change.
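To make "hybrid" concrete, here is a minimal sketch of one common way to merge a BM25 ranking and an embedding ranking into a single ranked list: reciprocal rank fusion. This is an assumption for illustration, not a description of how QMD actually combines its two result sets. The chunk ids and the constant k=60 are hypothetical placeholders.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of chunk ids into one ranking.

    Each list contributes 1 / (k + rank) to a chunk's score, so a chunk
    that ranks well in both the lexical and the vector list rises to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by id so the output is deterministic.
    return sorted(scores, key=lambda chunk_id: (-scores[chunk_id], chunk_id))


# Hypothetical results for one query from the two retrievers.
bm25_hits = ["auth.md#2", "overview.md#1", "deploy.md#4"]
vector_hits = ["overview.md#1", "teams.md#3", "auth.md#2"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

The appeal of rank-based fusion is that it never compares a BM25 score to a cosine similarity directly, so neither retriever needs score calibration.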

Auth + Permissions + Teams + Sharing

The layer that makes a knowledge base usable by more than one person. Per-collection visibility (private to me / shared with a team / public to the org / mixable). Per-collection permissions (read / write / curate). Team invitations, role assignments, audit log. The thing that's missing entirely from the local cockpit pattern.
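A rough sketch of how per-collection visibility and permissions could resolve for a given user. Everything here is hypothetical: the class names, the assumption that curate includes write and write includes read, and the rule that team or org visibility implies read access. The "mixable" case is left out to keep the sketch short.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional


class Permission(IntEnum):
    # Assumed ordering: each level includes the ones below it.
    READ = 1
    WRITE = 2
    CURATE = 3


@dataclass
class User:
    name: str
    teams: set = field(default_factory=set)


@dataclass
class Collection:
    name: str
    owner: str
    visibility: str = "private"  # "private", "team", or "org"
    team: Optional[str] = None
    grants: dict = field(default_factory=dict)  # user name -> Permission


def effective_permission(user, collection):
    """Return the strongest permission the user holds, or None for no access."""
    if user.name == collection.owner:
        return Permission.CURATE
    candidates = []
    if user.name in collection.grants:
        candidates.append(collection.grants[user.name])
    if collection.visibility == "org":
        candidates.append(Permission.READ)
    if collection.visibility == "team" and collection.team in user.teams:
        candidates.append(Permission.READ)
    return max(candidates) if candidates else None


def can(user, collection, needed):
    granted = effective_permission(user, collection)
    return granted is not None and granted >= needed


ana = User("ana", teams={"platform"})
ivan = User("ivan", teams={"platform"})
marko = User("marko", teams={"mobile"})
guest = User("guest")

runbooks = Collection(
    "runbooks", owner="ana", visibility="team", team="platform",
    grants={"marko": Permission.WRITE},
)

print(can(ivan, runbooks, Permission.READ))    # teammate reads via team visibility
print(can(marko, runbooks, Permission.WRITE))  # explicit grant outside the team
print(can(guest, runbooks, Permission.READ))   # no team, no grant
```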

Integrations — the ingestion side

Slack threads, Confluence pages, Linear tickets, Jira issues, GitHub PRs/discussions, Notion docs, Google Docs — pull them into the KB on a schedule or on demand. Each integration emits markdown into the right collection with the right metadata. The KB becomes the canonical place where the team's distributed context converges.
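As a sketch of what "emits markdown into the right collection with the right metadata" might look like, the snippet below turns an already-fetched item into a markdown document with a front-matter header and picks a target collection from a routing table. The field names, the routing table, the "inbox" fallback, and the example URL are all hypothetical; no real integration API is called.

```python
import json
from dataclasses import dataclass
from datetime import date


@dataclass
class IngestedItem:
    source: str      # e.g. "slack", "linear", "github"
    title: str
    url: str
    fetched_on: date
    body: str


def collection_for(item, routing):
    """Pick the target collection for an item; unknown sources go to an inbox."""
    return routing.get(item.source, "inbox")


def to_markdown(item):
    """Render the item as markdown with a YAML-style front-matter block."""
    front_matter = "\n".join([
        "---",
        f"source: {item.source}",
        # json.dumps quotes the title so colons or quotes inside it stay valid.
        f"title: {json.dumps(item.title)}",
        f"url: {item.url}",
        f"fetched_on: {item.fetched_on.isoformat()}",
        "---",
    ])
    return f"{front_matter}\n\n# {item.title}\n\n{item.body.strip()}\n"


routing = {"slack": "team-discussions", "linear": "tickets"}
item = IngestedItem(
    source="slack",
    title="Deploy freeze: Friday",
    url="https://example.invalid/thread/1",
    fetched_on=date(2025, 1, 15),
    body="We freeze deploys at 14:00.\n",
)

doc = to_markdown(item)
print(collection_for(item, routing))
print(doc)
```

Because the output is plain markdown, the ingested document stays readable and grep-able like everything else in the KB.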

Web application — the curation surface

A clean web UI for the operator who isn't currently in their terminal. Browse collections, search, view a document, edit a document, share a document, add a teammate, configure permissions, set up integrations. Not the primary interface for daily use — that stays in the terminal — but the right surface for setup, occasional review, and collaborative editing.

API + MCP — the programmatic surface

REST API for everything. MCP (Model Context Protocol) servers for native AI-agent consumption — Claude Code, Codex, future agents all see Sinapt as a standard MCP-attached knowledge source. The same backend powers the web app, the CLI, third-party integrations, and your own custom tools.

CLI tool — the workflow-preserving surface

This is the critical piece. The sinapt CLI replaces the local QMD daemon for users who already run the cockpit pattern locally. Reconfigure your CLAUDE.md, skills, and git hooks to call sinapt query instead of qmd query, and your entire existing workflow keeps working, but now your KB lives in the cloud, syncs to teammates, and is accessible to remote agents. Zero workflow disruption. That's the adoption hook.
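A minimal sketch of the CLI idea, assuming a thin client that turns sinapt query into a request against a cloud API. Nothing here exists yet: the --collection and --limit flags, the /search endpoint, its parameter names, and the placeholder base URL are all hypothetical, and the sketch only builds the request URL without sending it.

```python
import argparse
from urllib.parse import urlencode

# Placeholder base URL; a real client would read this from configuration.
DEFAULT_API = "https://api.example.invalid/v1"


def build_parser():
    parser = argparse.ArgumentParser(prog="sinapt")
    sub = parser.add_subparsers(dest="command", required=True)
    query = sub.add_parser("query", help="search the cloud knowledge base")
    query.add_argument("text", help="the search text")
    query.add_argument("--collection", default=None, help="hypothetical flag")
    query.add_argument("--limit", type=int, default=5, help="hypothetical flag")
    return parser


def build_query_url(args, api_base=DEFAULT_API):
    """Translate parsed CLI arguments into the URL the client would request."""
    params = {"q": args.text, "limit": args.limit}
    if args.collection:
        params["collection"] = args.collection
    return f"{api_base}/search?{urlencode(params)}"


args = build_parser().parse_args(["query", "how do we rotate tokens", "--limit", "3"])
url = build_query_url(args)
print(url)
```

The design point is that the command shape stays the same for the operator, so a skill or git hook only changes the binary name it calls, not how it calls it.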

Configuration — the cross-machine substrate

Account, credentials, integration tokens, default collections, search preferences — all stored in the cloud, follow the operator across machines. Switch laptops, log in, everything is there. Onboard a teammate, they get the team's shared KB instantly.

Proof of Concept first, MVP second

The temptation with a clear product vision is to jump to MVP. Don't. There's a meaningful step before that.

Proof of Concept answers: can this even work? Build the absolute minimum infrastructure that proves the core architecture is feasible. Markdown files in cloud storage, QMD running as a managed service, a thin API in front of both, a CLI client that talks to the API, and exactly enough auth to sign in. No integrations. No web app. No teams. No sharing. No billing. Just: can a single user point their existing CLAUDE.md at a cloud-resident KB and have the same workflow keep working transparently?

If the answer is yes — and the latency, cost, and reliability are acceptable — the architecture is validated. If the answer is no, you learn it on a 2-3 week build instead of a 4-month one.

MVP is the next phase: take the validated PoC and build it into a real product. Web app for setup and curation. Team support. Multiple integrations. Per-collection permissions. Billing. Onboarding flow. Documentation. Support. The thing a real team can adopt and pay for.

This is the same staging discipline I used in Spec-Driven Agentic Development and KISS Your AI Workflow: prove the smallest possible thing first, then expand. The temptation to skip the PoC step is exactly proportional to the cost of being wrong on the architecture. Architecture mistakes at MVP scale are 10× more expensive to fix than at PoC scale.

sinapt.ai — AI tools for developers, engineers, and teams

sinapt.ai becomes the home for the company, not just the product. Positioning: AI-native infrastructure for engineering teams. Sinapt the knowledge base is the first product, and the first thing the site sells. The cockpit is the second product on the roadmap. Future tools and services in the same orbit (eval infrastructure, agent observability, team workflow analytics) live under the same brand and brand promise.

This framing matters because it's the difference between "a knowledge-base tool" and "the company that builds the infrastructure layer for AI-native engineering teams." The first is a feature comparison. The second is a category. Categories command attention and pricing power that features cannot.

It also gives the cockpit (Product 2) a natural home when its time comes — same brand, same audience, same positioning, just a different surface of the same underlying infrastructure.

Where this lands

Yesterday's piece introduced an idea. This piece refines it. The next one — when there's something to show — will be the proof-of-concept demo and the lessons from actually building it.

If you want to follow how an idea evolves from words to code in real time, this is the cleanest place to watch it happen. Subscribe.

Related Reading

- Sinapt and the Queryable Company

- Claude Killed the API Key

- Memory Outside the Tree

- Sinapt: One Cockpit for the AI-Native Operator (yesterday's piece — the foundation)